Fred and I discuss Enterprise Mode on the Microsoft Edge blog:

Helping our Enterprise customers stay up-to-date with the latest version of the browser is a top priority for Microsoft. This is particularly important for Windows 7 customers who should upgrade to Internet Explorer 11 by January 12, 2016 to continue receiving security updates and technical support. We understand many customers have web apps and services designed specifically for older versions of Internet Explorer, so we’re continuing to improve our set of Enterprise Mode tools to help you run those applications in Internet Explorer 11. Upgrading to Internet Explorer 11 can ease the upgrade to Windows 10, too, since Internet Explorer 11 is supported on Windows 7, Windows 8.1, and Windows 10.

Continuing to improve Enterprise Mode

We continue to make significant improvements to Enterprise Mode, helping customers upgrade more easily to Internet Explorer 11 while also extending the ability to run older web apps. Customer feedback has told us that we’re on the right track with these tools, and that IE upgrades are going more smoothly than ever before.

Here are some features and resources available for you today:

IE8 Enterprise Mode

IE8 Enterprise Mode provides a higher fidelity emulation of the Internet Explorer 8 browser by turning on functionality not available in other document modes. You can use the Enterprise Mode Site List Manager to specify any web path to load in this mode.
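
As a minimal sketch of what such an entry can look like, assuming the existing v.1 site list schema and hypothetical hostnames and paths, entries in the emie section load in IE8 Enterprise Mode:

```xml
<rules version="1">
  <emie>
    <!-- Hypothetical: the whole domain loads in IE8 Enterprise Mode -->
    <domain>contoso.com</domain>
    <!-- Hypothetical: the domain itself is excluded, but one path is included -->
    <domain exclude="true">fabrikam.com
      <path exclude="false">/legacy</path>
    </domain>
  </emie>
</rules>
```

The Enterprise Mode Site List Manager generates this XML for you, so hand-editing is optional.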

IE7 Enterprise Mode

Internet Explorer 8 shipped with Compatibility View, a compatibility option that looks for the DOCTYPE tag, rendering a web page in IE7 document mode if it exists, or in IE5 document mode if it doesn’t.

While IE11 still has Compatibility View, you can use IE7 Enterprise Mode to provide a higher fidelity emulation of how the IE8 browser acts when running in Compatibility View. You can use the Enterprise Mode Site List Manager to specify any web path to load in this mode.
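
A minimal sketch of an IE7 Enterprise Mode entry, assuming the v.1 schema, where the forceCompatView attribute on an emie entry selects this mode (the hostname is hypothetical):

```xml
<rules version="1">
  <emie>
    <!-- Hypothetical: emie entry plus forceCompatView requests IE7 Enterprise Mode -->
    <domain forceCompatView="true">contoso.com</domain>
  </emie>
</rules>
```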

IE5, IE7, IE8, IE9, IE10, and IE11 Document Modes

Internet Explorer 11 supports document modes for emulation of the IE5, IE7, IE8, IE9, IE10, and IE11 rendering engines. While site markup can request the browser to load in these modes, you can also use the Enterprise Mode Site List to override the site to load in any document mode that makes the site work.
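
A minimal sketch of a document mode override, assuming the v.1 schema and its docMode section (the hostnames, path, and chosen modes are hypothetical):

```xml
<rules version="1">
  <docMode>
    <!-- Hypothetical: force the whole domain into IE9 document mode -->
    <domain docMode="9">contoso.com</domain>
    <!-- Hypothetical: force only one path into IE7 document mode -->
    <domain exclude="false">fabrikam.com
      <path docMode="7">/reports</path>
    </domain>
  </docMode>
</rules>
```

Pick the document mode by testing: the right value is whichever mode makes the site work, not necessarily the oldest one.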

Enterprise Site Discovery

If you want to prioritize your testing, you can use Enterprise Site Discovery with IE8, IE9, IE10, and IE11 to discover which web apps your users are visiting and how the apps are built, such as which document modes or ActiveX controls they use. The Enterprise Mode Site List Manager tool can even import this data to help you seed your Enterprise Mode Site List. Of course, using the F12 developer tools for manual testing is always an option, but Enterprise Site Discovery can help you quickly gather information from a broad set of users.

(New!) Support for HTTP ports in Enterprise Mode

We’ve also heard from many customers that they want the ability to apply Enterprise Mode compatibility features to sites with HTTP ports, such as http://contoso.com:8080, and we’ve listened: Enterprise Mode now supports HTTP ports. You can specify an HTTP port directly in your Enterprise Mode Site List XML, such as <domain>contoso.com:8080</domain>, or you can use the new Enterprise Mode Site List Manager tool to add HTTP ports. To use this feature on Windows 7 or Windows 8.1, you’ll also need to install the IE11 October Cumulative Update or later.
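
To show that fragment in context, here is a minimal sketch assuming the v.1 schema, with the port-qualified domain placed in the emie section (the surrounding list is hypothetical):

```xml
<rules version="1">
  <emie>
    <!-- The port is written as part of the domain value -->
    <domain>contoso.com:8080</domain>
  </emie>
</rules>
```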

(New!) Web Application Compatibility Lab Kit

To help customers learn how to use Enterprise Mode and Enterprise Site Discovery, today we are introducing the Web Application Compatibility Lab Kit. This Lab Kit provides a walk-through of how to configure and set up Enterprise Mode in addition to Enterprise Site Discovery, the F12 developer tools, and the Enterprise Mode Site List Manager. The Lab Kit comes with VMs for Windows 7 SP1 Enterprise (Evaluation) and Windows 10 Enterprise (Evaluation), or you can download a “lite” version without the VMs.

For more information or assistance:

- Technical guidance, tools, and resources on Enterprise browsing

- Enterprise Mode for Internet Explorer 11

- Download Enterprise Mode Site List Manager tool for Windows 7 and Windows 8.1 Update

- Download Enterprise Mode Site List Manager tool for Windows 10

- Use the Enterprise Mode Site List Manager tool

- Collect data using Enterprise Site Discovery

- Web Application Compatibility Lab Kit

- Microsoft Services Support

- Find a Microsoft partner on Pinpoint

New improvements to Enterprise Mode in Windows 10

We’re also making it easier for customers to upgrade to Windows 10, because Windows 10 supports the same version of Internet Explorer 11—along with all of the Enterprise Mode features—available on Windows 7 and Windows 8.1. At the same time, we’ve continued to make improvements to Enterprise Mode in the Windows 10 Fall Update and higher to simplify management and better support Microsoft Edge. Many of these improvements will also be made available to Internet Explorer 11 on Windows 7 and Windows 8.1 early next year.

Microsoft Edge and IE11 work better together on Windows 10

Windows 10 features Microsoft Edge, a new browser that goes beyond browsing with features like Web Note and Cortana integration. Microsoft Edge is built from the ground up to improve productivity, to be more secure, and to correctly, quickly, and reliably render web pages. As we announced a few months ago, you can use Enterprise Mode with Microsoft Edge to open Internet Explorer 11 for your business’s sites that require IE proprietary technologies. This approach lets your users run a modern browser designed for better productivity, security, and rendering, without sacrificing compatibility with legacy line-of-business applications.

However, we also recognize that some customers have a significant number of sites that require IE proprietary technologies, and still need to use Internet Explorer 11 as their primary browser. For those customers, we have introduced a new capability in Enterprise Mode for Internet Explorer 11 to open Microsoft Edge for modern sites that need the latest web platform features. This feature has a very similar user experience to the analogous feature in Microsoft Edge to open IE11.

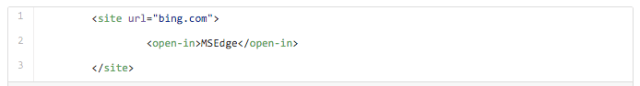

In this example, we’ve set up Enterprise Mode with IE11 to open http://www.bing.com in Microsoft Edge.

With this change, customers now have the following options for browsers on Windows 10:

| Option | Guidance |
| --- | --- |
| Use Microsoft Edge as your primary browser | If your business sites primarily use modern web technologies, we recommend that you use Microsoft Edge as your primary browser. |
| Use Microsoft Edge as your primary browser and use Enterprise Mode to open sites in IE11 that use IE proprietary technologies | If your business sites primarily use modern web technologies but you still have older sites that use IE proprietary technologies, we recommend that you use Microsoft Edge as your primary browser and use the Enterprise Mode Site List to open older sites automatically in Internet Explorer 11. This approach helps ensure your new site development continues to happen on a modern web platform, but that you can still use your older apps that were designed for older versions of IE. Your Enterprise Mode Site List also becomes a to-do list for sites that you need to modernize. Many customers tell us they plan to use this approach. We use this option at Microsoft, since we still have some older applications built for older IE technologies. |
| Use Microsoft Edge as your primary browser and open all intranet sites in IE11 | If you have external sites that use modern web technologies, but still have a large number of internal business sites that use IE proprietary technologies, you can choose to use Microsoft Edge as your primary browser and open all intranet sites in IE11 using the Sends all intranet traffic over to Internet Explorer Microsoft Edge Group Policy or MDM setting. You can still use the Enterprise Mode Site List to ensure the internal sites that work with Microsoft Edge don’t open in IE11. This approach helps ensure your new site development continues to happen on a modern web platform, but balances it with your need to continue using older apps that were designed for older versions of IE. |
| Use IE11 as your primary browser and use Enterprise Mode to open sites in Microsoft Edge that use modern web technologies | If you have more business sites built on IE proprietary technologies than modern web technologies, you can choose to use IE11 as your primary browser and use the Enterprise Mode Site List to explicitly define the modern web sites that should open automatically in Microsoft Edge. This approach helps ensure your new site development continues to happen on a modern web platform engine in Microsoft Edge, while balancing the fact that most of your web apps are still based on IE. |
| Use IE11 as your primary browser | If you want the exact same environment on Windows 10 as on Windows 7 or Windows 8.1, you can choose to use IE11 as your primary browser. You can set IE11 as your default browser by using the Set a default associations configuration file setting in the File Explorer Group Policy. While this is a great approach to quickly get to Windows 10, we recommend that customers consider using Microsoft Edge as a more secure, reliable browser instead. Developing new business applications using IE proprietary technologies could also make it harder for you to adopt modern web technologies in the future. |

Simpler, cleaner, and more scalable Enterprise Mode XML schema

We’ve heard that most customers are using the Enterprise Mode Site List to upgrade to IE11 on Windows 7, and will likely continue to use the site list going forward. We’ve also heard feedback that the existing site list XML schema isn’t always very easy to understand. Starting in the Windows 10 Fall Update, we’re now supporting a new v.2 Enterprise Mode XML schema, which is designed to be simpler, cleaner, more scalable, and to help ease list management. Internet Explorer 11 and Microsoft Edge on Windows 10 will continue to support the existing v.1 XML schema that is supported on Windows 7 and Windows 8.1 today, as well as the new v.2 XML schema.

Below is an example of an Enterprise Mode Site List based on the existing v.1 XML schema. Customer feedback included:

- It’s not clear that if the same entry appears in the emie and docMode sections, the emie section wins.

- The double negative of doNotTransition="false" is confusing.

- There are many ways to put a site in a document mode: entry in emie section, entry in docMode section, or forceCompatView attribute.

- You have to break up a URL into the domain and paths separately for the emie and docMode sections.

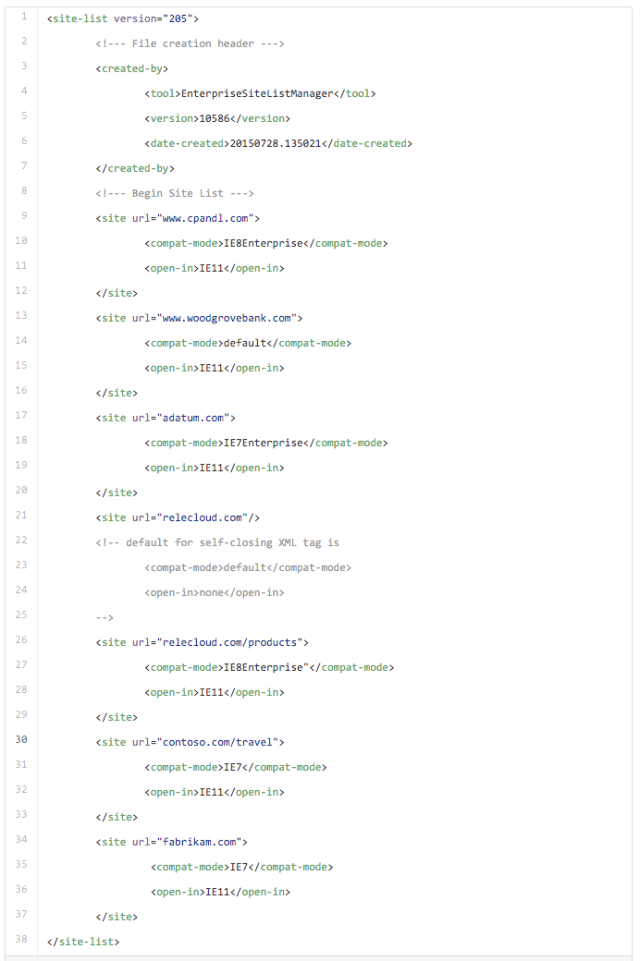
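
The feedback points above can be illustrated with a small v.1 sketch. The hostname, path, and attribute values are hypothetical, and this is not the list shown in the image:

```xml
<rules version="1">
  <emie>
    <!-- The URL contoso.com/about has to be split into a domain and a path. -->
    <!-- doNotTransition="false" means the site is allowed to move to the other browser. -->
    <domain doNotTransition="false">contoso.com
      <!-- One of several ways to pick a mode: forceCompatView on an emie entry -->
      <path forceCompatView="true">/about</path>
    </domain>
  </emie>
  <docMode>
    <!-- Same domain listed again; the emie entry above wins -->
    <domain docMode="9">contoso.com</domain>
  </docMode>
</rules>
```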

Below, we have the same Enterprise Mode Site List using the v.2 XML schema. Here are some of the changes:

- All entries are just URLs under the site element. This reduces the hierarchy and the need to split sites into domains and paths.

- We’ve introduced a new compat-mode element where you can specify any compatibility mode: IE8Enterprise, IE7Enterprise, IE5, IE7, IE8, IE9, IE10, IE11, and default. This element replaces the emie and docMode sections, and the forceCompatView and exclude attributes.

- We’ve introduced a new open-in element where you can specify which browser the site should open in: IE11, MSEdge, or none (don’t open in another browser). This replaces the double negative of doNotTransition="false".

- You can use a self-closing element with no children to declare a site should just use the browser defaults. This replaces the exclude attribute.

- We include the date the list was created and the Enterprise Mode Site List Manager tool version number for easier site list version management. This information doesn’t impact how the browser interprets the site list.

- This format makes it very easy for us to introduce new Enterprise Mode features as children elements to the site element.
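
As a rough sketch of how these changes fit together, assuming the v.2 element names described above, a site list might look like the following. The hostnames, the list version number, and the created-by values are hypothetical placeholders:

```xml
<site-list version="1">
  <!-- Informational header; it does not change how the browser reads the list -->
  <created-by>
    <tool>EnterpriseSiteListManager</tool>
    <version>10586</version>
    <date-created>20151117.000000</date-created>
  </created-by>
  <!-- Each entry is a plain URL: domain, path, and port together -->
  <site url="contoso.com">
    <compat-mode>IE8Enterprise</compat-mode>
    <open-in>IE11</open-in>
  </site>
  <site url="contoso.com/about">
    <compat-mode>IE7Enterprise</compat-mode>
    <open-in>IE11</open-in>
  </site>
  <site url="fabrikam.com:8080">
    <compat-mode>IE9</compat-mode>
    <open-in>none</open-in>
  </site>
  <!-- Self-closing entry: use the browser defaults for this site -->
  <site url="relecloud.com"/>
</site-list>
```

Because every site carries its own compat-mode and open-in children, there is only one place to look to see how a given URL is handled.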

We encourage customers to adopt the v.2 XML schema, which is easier to read and manage, starting with Windows 10 and coming in early 2016 to IE11 on Windows 7 and Windows 8.1. Going forward, we plan to bring new features only to the v.2 XML schema. For example, the new Enterprise Mode feature for IE11 to open sites in Microsoft Edge is only supported with the new v.2 XML schema.

Here is an example of how you can specify that IE11 open a site in Microsoft Edge:
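
A minimal sketch, assuming the v.2 schema and reusing the www.bing.com site from the earlier screenshot. The list version number is a placeholder, and the created-by header is left out for brevity:

```xml
<site-list version="1">
  <site url="www.bing.com">
    <!-- Use the default rendering behavior for the site -->
    <compat-mode>default</compat-mode>
    <!-- When the site is visited in IE11, open it in Microsoft Edge instead -->
    <open-in>MSEdge</open-in>
  </site>
</site-list>
```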

In addition to the new v.2 XML schema, we’re also releasing a new version of the Enterprise Mode Site List Manager tool for Windows 10. This version of the tool lets you import an existing v.1 Enterprise Mode Site List XML file and automatically convert it to the v.2 XML schema.

Important note:

Customers are working to upgrade to IE11 on Windows 7 and Windows 8.1 before the January 12, 2016 support policy deadline, and we don’t want to add the extra work of migrating from the v.1 to the v.2 XML schema during that effort. For that reason, we’ve decided not to support the v.2 XML schema on Windows 7 or Windows 8.1 yet. We recommend customers working on IE11 upgrades on Windows 7 and Windows 8.1 continue to use the existing v.1 XML schema and the existing Enterprise Mode Site List Manager tool for Windows 7 and Windows 8.1 Update. The existing schema continues to be supported on all versions of Windows where IE11 is supported, including Windows 10. We will make the v.2 Enterprise Mode XML schema available in Internet Explorer 11 for Windows 7 and Windows 8.1 early next year, and customers shouldn’t consider moving to the v.2 XML schema until that patch lands on all of their devices.

To learn more about these Enterprise Mode XML schema changes, see the following:

- Enterprise Mode schema v.2 guidance for Windows 10-based devices

- Download Enterprise Mode Site List Manager tool for Windows 10

- Enterprise Mode schema v.1 guidance for Windows 7 and Windows 8.1 Update devices

- Download Enterprise Mode Site List Manager tool for Windows 7 and Windows 8.1 Update

Better diagnostics and management with about:compat

In the Windows 10 Fall Update, we’ve also introduced a new about:compat page in Microsoft Edge and Internet Explorer 11 to help customers better manage their Enterprise Mode Site List. You can use about:compat to see all of the compatibility features you or Microsoft have applied to sites on the client machine. This tool is not only an easy way to visualize and search the Enterprise Mode Site List, but a great way to diagnose problems on a client machine, like whether the latest Enterprise Mode Site List is being applied.

On Internet Explorer 11 for Windows 10, you can view and search the Enterprise Mode Site List, local additions to the Enterprise Mode list, the Microsoft Compatibility List, and local additions to Compatibility View.

On Microsoft Edge, you can view and search the Enterprise Mode Site List and Microsoft Compatibility List, as only those lists are applicable.

Upgrade guidance

Migrating to Internet Explorer 11 is easier than ever before, thanks to backward compatibility features like Enterprise Mode. Please start with the resources in this post, such as the Web Application Compatibility Lab Kit, to learn how to use Enterprise Mode and Enterprise Site Discovery effectively. If you still have a technical or business impediment that prevents you from upgrading, please reach out to your Microsoft account team or Microsoft partner, as we may be able to help.

We made these product improvements and upgrade resources based on customer feedback, and hope the new features help you manage browser compatibility more easily than ever before. Like other customers, you may also find that upgrading to Internet Explorer 11 with Enterprise Mode is easier and less costly than previous upgrades. Best of all, the upgrade to Internet Explorer 11 should help ease your migration to Windows 10 and Microsoft Edge.

– Jatinder Mann, Senior Program Manager Lead

– Fred Pullen, Product Marketing Director