In this IE Blog article I talk about how to use the Timing APIs to understand the real-world performance of your web applications.

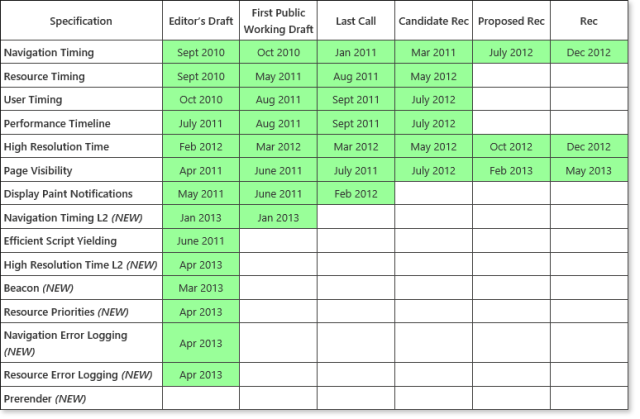

Together with Google, Mozilla, Microsoft, and other community leaders, the W3C Web Performance working group has standardized the Navigation Timing, Resource Timing, User Timing, and Performance Timeline interfaces to help you understand the real-world performance of navigating, fetching resources, and running scripts in your Web application. You can use these interfaces to capture and analyze how your real-world customers are experiencing your Web application, instead of relying on synthetic testing, which tests the performance of your application in an artificial environment. With this timing data, you can identify opportunities to improve the real-world performance of your Web applications. All of these interfaces are supported in IE11. Check out the Performance Timing Test Drive to see these interfaces in action.

The Performance Timing Test Drive lets you try out the Timing APIs.

Performance Timeline

The Performance Timeline specification has been published as a W3C Recommendation and is fully supported in IE11 and Chrome 30. Using this interface, you can get an end-to-end view of the time spent navigating, fetching resources, and executing scripts in your application. This specification defines both the minimum attributes all performance metrics need to implement and the interfaces developers can use to retrieve any type of performance metric.

All performance metrics must support the following four attributes:

- name. This attribute stores a unique identifier for the performance metric. For example, for a resource, it will be the resolved URL of the resource.

- entryType. This attribute stores the type of performance metric. For example, a metric for a resource would be stored as “resource.”

- startTime. This attribute stores the first recorded timestamp of the performance metric.

- duration. This attribute stores the end-to-end duration of the event being recorded by the metric.

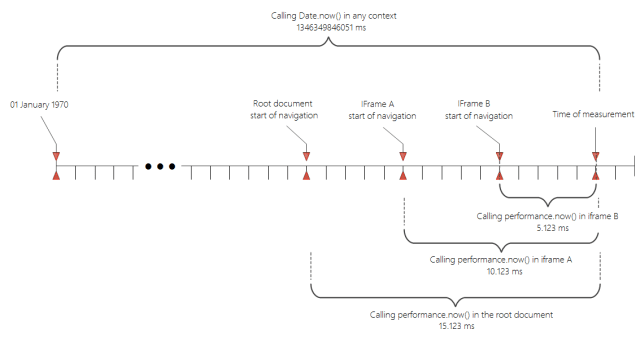

All of the timing data is recorded in high resolution time using the DOMHighResTimeStamp type, defined in the High Resolution Time specification. Unlike a DOMTimeStamp, which measures time in milliseconds from 01 January, 1970 UTC, a high resolution time value is measured from the start of navigation of the document with at least microsecond resolution. For example, if I check the current time using performance.now(), the high resolution analog of Date.now(), I get the following values:

> performance.now();

4038.2319370044793

> Date.now()

1386797626201

This time value also has the benefit of not being impacted by clock skew or adjustments. You can explore the What Time Is It Test Drive to understand the use of high resolution time.
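As a minimal sketch of why this matters for measurement, the snippet below times a block of work with performance.now(). The helper name timeIt and the sample loop are assumptions made for illustration; they are not part of any specification.

```javascript
// Hypothetical helper (not part of any specification): time a synchronous
// function using the high resolution clock.
function timeIt(fn) {
  var start = performance.now();    // milliseconds since the start of navigation
  fn();
  return performance.now() - start; // elapsed milliseconds, possibly fractional
}

// Sample workload, invented for illustration.
var elapsed = timeIt(function () {
  var sum = 0;
  for (var i = 0; i < 100000; i++) { sum += i; }
});

console.log('Elapsed (ms): ' + elapsed);
```

Because both readings come from the same clock, which is not impacted by adjustments, the difference should not go negative the way a Date.now() difference can when the system clock is changed.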

You can use the following interfaces to retrieve a list of the performance metrics recorded at the time of the call. Using the startTime and duration, as well as any other attributes provided by the metric, you can obtain an end-to-end timeline view of your page performance as your customers experienced it.

PerformanceEntryList getEntries();

PerformanceEntryList getEntriesByType(DOMString entryType);

PerformanceEntryList getEntriesByName(DOMString name, optional DOMString entryType);

The getEntries method returns a list of all of the performance metrics on the page, whereas the other two methods return only the entries that match the given name or type. We expect most developers will just call JSON.stringify on the entire list of metrics and send the results to their server for analysis, rather than processing the information on the client.
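The serialize-and-send approach could look roughly like the sketch below. The helper name serializeEntries and the sample entry are assumptions for illustration. The helper copies only the four attributes that every metric must support, so it does not depend on how a particular browser serializes entry objects.

```javascript
// Hypothetical helper: reduce each entry to the four attributes every
// performance metric must support, then serialize the list as JSON.
function serializeEntries(entries) {
  var list = [];
  for (var i = 0; i < entries.length; i++) {
    list.push({
      name: entries[i].name,
      entryType: entries[i].entryType,
      startTime: entries[i].startTime,
      duration: entries[i].duration
    });
  }
  return JSON.stringify(list);
}

// In a browser this would typically be called as:
//   var payload = serializeEntries(performance.getEntries());
// The sample entry below is invented so the sketch runs anywhere.
var sample = serializeEntries([
  {
    name: 'http://some-server/image1.png',
    entryType: 'resource',
    startTime: 12.5,
    duration: 30.25,
    initiatorType: 'img'
  }
]);

console.log(sample);
```

If you also want the metric-specific attributes, such as the network phases of a resource, you would extend the copied field list accordingly.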

Let’s take a closer look at each of the different performance metrics: navigation, resource, marks, and measures.

Navigation Timing

The Navigation Timing interfaces provide accurate timing measurements for each of the phases of navigating to your Web application. The Navigation Timing L1 specification has been published as a W3C Recommendation, with full support since IE9, Chrome 28, and Firefox 23. The Navigation Timing L2 specification is a First Public Working Draft and is supported by IE11.

With Navigation Timing, developers can not only get the accurate end-to-end page load time, including the time it takes to get the page from the server, but also get the breakdown of where that time was spent in each of the networking and DOM processing phases: unload, redirect, app cache, DNS, TCP, request, response, DOM processing, and the load event. The script below uses Navigation Timing L2 to get this detailed information. The entry type for this metric is “navigation,” while the name is “document.” Check out a demo of Navigation Timing on the IE Test Drive site.

<!DOCTYPE html>

<html>

<head></head>

<body onload='setTimeout(sendNavigationTiming, 0)'>

<script>

function sendNavigationTiming() {

// The single entry of type 'navigation' describes the current document.

var nt = performance.getEntriesByType('navigation')[0];

var navigation = 'Start Time: ' + nt.startTime;

navigation += ', Duration: ' + nt.duration;

navigation += ', Unload: ' + (nt.unloadEventEnd - nt.unloadEventStart);

navigation += ', Redirect: ' + (nt.redirectEnd - nt.redirectStart);

navigation += ', App Cache: ' + (nt.domainLookupStart - nt.fetchStart);

navigation += ', DNS: ' + (nt.domainLookupEnd - nt.domainLookupStart);

navigation += ', TCP: ' + (nt.connectEnd - nt.connectStart);

navigation += ', Request: ' + (nt.responseStart - nt.requestStart);

navigation += ', Response: ' + (nt.responseEnd - nt.responseStart);

navigation += ', Processing: ' + (nt.domComplete - nt.domLoading);

navigation += ', Load Event: ' + (nt.loadEventEnd - nt.loadEventStart);

sendAnalytics(navigation);

}

</script>

</body>

</html>

By looking at the detailed time spent in each of the network phases, you can better diagnose and fix your performance issues. For example, you may consider not using a redirection if you find redirect time is high, use a DNS caching service if DNS time is high, use a CDN closer to your users if request time is high, or GZip your content if response time is high. Check out this video for tips and tricks on improving your network performance.
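As a rough sketch of this kind of diagnosis, the helper below computes each network phase from a navigation entry and reports the one that took longest. The function name slowestPhase and the sample values are assumptions for illustration only.

```javascript
// Hypothetical helper: compute each network phase from a navigation entry
// and report the phase with the longest duration.
function slowestPhase(nt) {
  var phases = {
    redirect: nt.redirectEnd - nt.redirectStart,
    dns: nt.domainLookupEnd - nt.domainLookupStart,
    tcp: nt.connectEnd - nt.connectStart,
    request: nt.responseStart - nt.requestStart,
    response: nt.responseEnd - nt.responseStart
  };
  var worst = null;
  for (var key in phases) {
    if (worst === null || phases[key] > phases[worst]) {
      worst = key;
    }
  }
  return { phase: worst, duration: phases[worst] };
}

// Sample values invented for illustration. In a browser you would pass
// performance.getEntriesByType('navigation')[0] instead.
var result = slowestPhase({
  redirectStart: 0, redirectEnd: 0,
  domainLookupStart: 5, domainLookupEnd: 45,
  connectStart: 45, connectEnd: 70,
  requestStart: 70, responseStart: 190, responseEnd: 230
});

console.log(result.phase + ': ' + result.duration);
```

With these sample values the request phase dominates, which is the case where moving content closer to your users would be worth investigating.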

The main difference between the two Navigation Timing specification versions is in how timing data is accessed and in how time is measured. The L1 interface defines these attributes under the performance.timing object and in milliseconds since 01 January, 1970. The L2 interface allows the same attributes to be retrieved using the Performance Timeline methods, enables them to be more easily placed in a timeline view, and records them with high resolution timers.
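To illustrate the difference in time base, the sketch below converts a few L1 attributes into offsets from navigationStart so that they line up with the values an L2 entry would report. The helper name toRelativeTiming, the chosen attribute list, and the sample values are assumptions for illustration.

```javascript
// Hypothetical helper: convert selected L1 attributes (milliseconds since
// 01 January, 1970) into offsets from navigationStart.
function toRelativeTiming(timing) {
  var names = [
    'fetchStart', 'domainLookupStart', 'domainLookupEnd',
    'connectStart', 'connectEnd', 'requestStart',
    'responseStart', 'responseEnd', 'loadEventStart', 'loadEventEnd'
  ];
  var relative = {};
  for (var i = 0; i < names.length; i++) {
    var value = timing[names[i]];
    // Some L1 attributes are 0 until their event has happened (for example
    // loadEventEnd before the load event finishes); keep those as 0.
    relative[names[i]] = value > 0 ? value - timing.navigationStart : 0;
  }
  return relative;
}

// Sample values invented for illustration. In a browser you would pass
// performance.timing instead.
var relativeTiming = toRelativeTiming({
  navigationStart: 1386797626201,
  fetchStart: 1386797626211,
  responseEnd: 1386797626431,
  loadEventEnd: 0
});

console.log(relativeTiming.fetchStart, relativeTiming.responseEnd);
```

The converted values are still whole milliseconds; only the L2 entries carry the extra precision of the high resolution timer.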

Prior to Navigation Timing, developers would commonly try to measure page load performance by writing JavaScript in the head of the document, as in the code sample below. Check out a demo of this technique on the IE Test Drive site.

<!DOCTYPE html>

<html>

<head>

<script>

var start = Date.now();

function sendPageLoad() {

var now = Date.now();

var latency = now - start;

sendAnalytics('Page Load Time: ' + latency);

}

</script>

</head>

<body onload='sendPageLoad()'>

</body>

</html>

However, this technique does not accurately measure page load performance, because it does not include the time it takes to get the page from the server. Additionally, running JavaScript in the head of the document is generally a poor performance pattern.

Resource Timing

Resource Timing provides accurate timing information on fetching resources in the page. Similar to Navigation Timing, Resource Timing provides detailed timing information on the redirect, DNS, TCP, request, and response phases of the fetched resources. The Resource Timing specification has been published as a W3C Candidate Recommendation with support since IE10 and Chrome 30.

The following sample code uses the getEntriesByType method to obtain all resources initiated by the img element. The entry type for resources is “resource,” and the name will be the resolved URL of the resource. Check out a demo of Resource Timing on the IE Test Drive site.

<!DOCTYPE html>

<html>

<head></head>

<body onload='sendResourceTiming()'>

<img src='http://some-server/image1.png'>

<img src='http://some-server/image2.png'>

<script>

function sendResourceTiming()

{

var resourceList = window.performance.getEntriesByType('resource');

for (var i = 0; i < resourceList.length; i++)

{

// Report only the resources that were requested by an img element.

if (resourceList[i].initiatorType == 'img')

{

sendAnalytics('Image Fetch Time: ' + resourceList[i].duration);

}

}

}

</script>

</body>

</html>

For security purposes, cross-origin resources only show their start time and duration; the detailed timing attributes are set to zero. This helps avoid issues of statistical fingerprinting, where someone can try to determine your membership in an organization by confirming whether a resource is in your cache by looking at the detailed network time. The cross-origin server can send the Timing-Allow-Origin HTTP header if it wants to share timing data with you.
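When analyzing resource entries, it can help to separate the ones that carry detailed timing from the ones whose details were zeroed. The sketch below uses a zero requestStart as the signal, which is a heuristic assumed here for illustration. The helper name splitByTimingDetail and the sample entries are also hypothetical.

```javascript
// Hypothetical helper: split resource entries into those that expose
// detailed network timing and those whose detailed attributes are zero.
// Treating requestStart === 0 as "restricted" is an assumed heuristic.
function splitByTimingDetail(resources) {
  var detailed = [];
  var restricted = [];
  for (var i = 0; i < resources.length; i++) {
    if (resources[i].requestStart > 0) {
      detailed.push(resources[i]);
    } else {
      restricted.push(resources[i]);
    }
  }
  return { detailed: detailed, restricted: restricted };
}

// Sample entries invented for illustration. In a browser you would pass
// performance.getEntriesByType('resource') instead.
var split = splitByTimingDetail([
  { name: 'http://some-server/image1.png', startTime: 20, duration: 35, requestStart: 24 },
  { name: 'http://other-server/image2.png', startTime: 22, duration: 80, requestStart: 0 }
]);

console.log(split.detailed.length + ' detailed, ' + split.restricted.length + ' restricted');
```

For the restricted entries you can still report startTime and duration, just not the per-phase breakdown.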

User Timing

User Timing provides detailed timing information on the execution of scripts in your application, complementing Navigation Timing and Resource Timing, which provide detailed network timing information. User Timing allows you to display your script timing information in the same timeline view as your network timing data to get an end-to-end understanding of your app performance. The User Timing specification has been published as a W3C Recommendation, with support since IE10 and Chrome 30.

The User Timing interface defines two metrics used to measure script timing: marks and measures. A mark represents a high resolution time stamp at a given point in time during your script execution. A measure represents the difference between two marks.

The following methods can be used to create marks and measures:

void mark(DOMString markName);

void measure(DOMString measureName, optional DOMString startMark, optional DOMString endMark);

Once you have added marks and measures to your script, you can retrieve the timing data by using the getEntries, getEntriesByType, or getEntriesByName methods. The mark entry type is “mark,” and the measure entry type is “measure.”

The following sample code uses the mark and measure methods to measure the amount of time it takes to execute the doTask1() and doTask2() methods. Check out a demo of User Timing on the IE Test Drive site.

<!DOCTYPE html>

<html>

<head></head>

<body onload='doWork()'>

<script>

function doWork()

{

performance.mark('markStartTask1');

doTask1();

performance.mark('markEndTask1');

performance.mark('markStartTask2');

doTask2();

performance.mark('markEndTask2');

performance.measure('measureTask1', 'markStartTask1', 'markEndTask1');

performance.measure('measureTask2', 'markStartTask2', 'markEndTask2');

sendUserTiming(performance.getEntries());

}

</script>

</body>

</html>
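If you would rather read back a single measure than send the whole entry list, a sketch along the following lines may help. The helper name measureTask, the mark naming scheme, and the sample workload are assumptions for illustration. The perf parameter is any object that exposes the mark, measure, and getEntriesByName methods, such as window.performance.

```javascript
// Hypothetical helper: wrap a task in a pair of marks, record a measure
// between them, and return the duration of that measure.
function measureTask(perf, name, task) {
  perf.mark(name + 'Start');
  task();
  perf.mark(name + 'End');
  perf.measure(name, name + 'Start', name + 'End');

  // Retrieve the measure that was just recorded by name and entry type.
  var entries = perf.getEntriesByName(name, 'measure');
  return entries[entries.length - 1].duration;
}

// Sample workload, invented for illustration.
var taskDuration = measureTask(performance, 'sampleTask', function () {
  var sum = 0;
  for (var i = 0; i < 100000; i++) { sum += i; }
});

console.log('sampleTask took (ms): ' + taskDuration);
```

Taking the last matching entry means that calling the helper repeatedly with the same name returns the most recent measurement rather than the first.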

We want to thank everyone in the W3C Web Performance Working Group for helping design these interfaces, and browser vendors for working on implementing them with an eye towards interoperability. With these interfaces, Web developers can truly start to measure and understand what they can do to improve the performance of their applications.

Try out the performance measurement interfaces with your Web apps in IE11, and as always, we look forward to your feedback via Connect.

Thanks,

Jatinder Mann, Internet Explorer Program Manager